PanoDepth

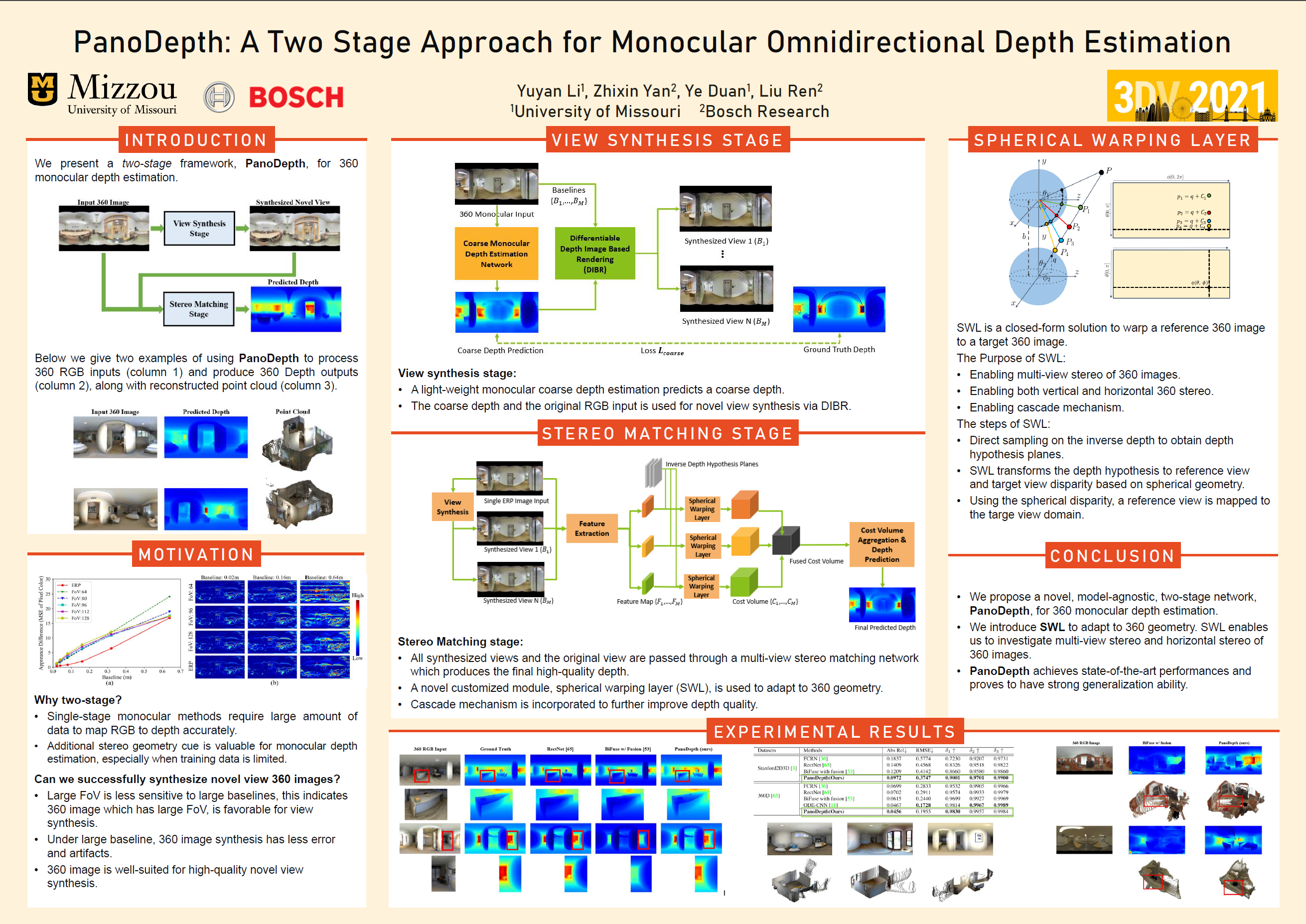

A Two-Stage Approach for Monocular Omnidirectional Depth Estimation

Abstract

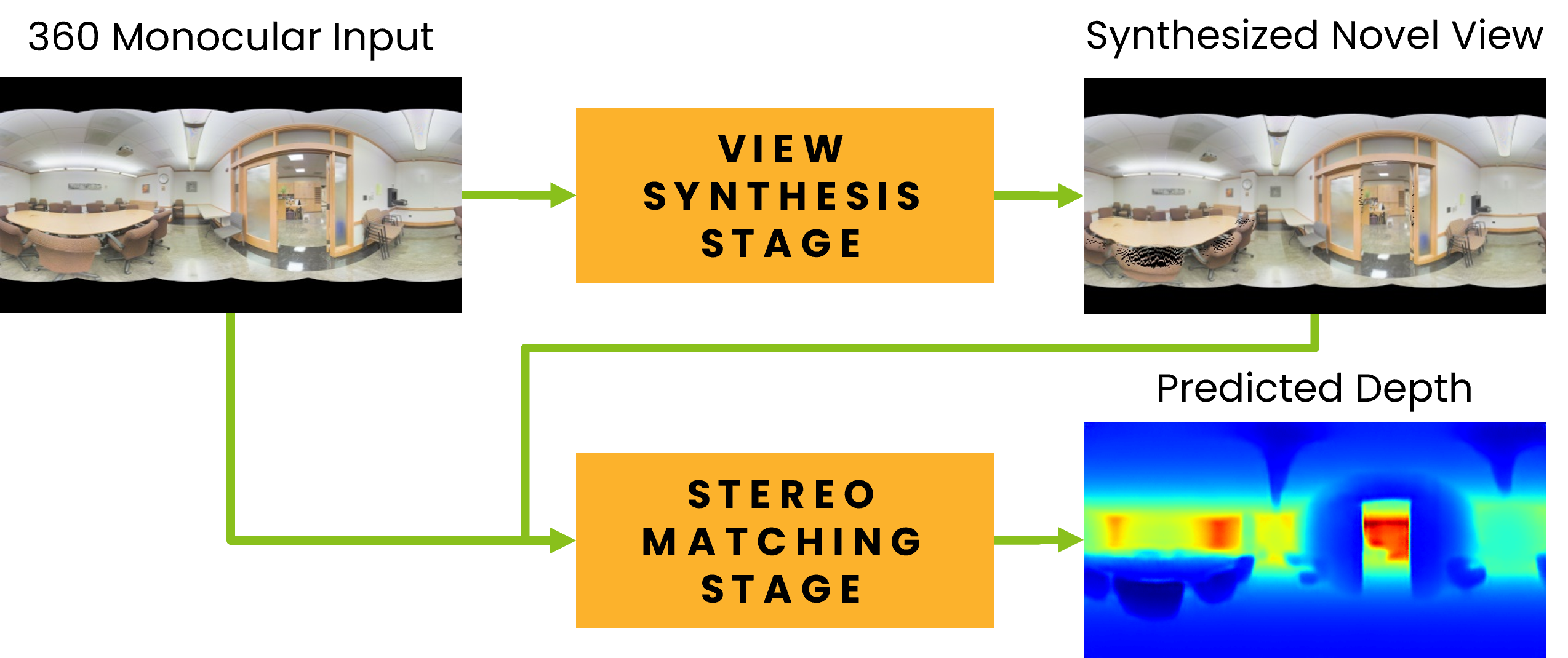

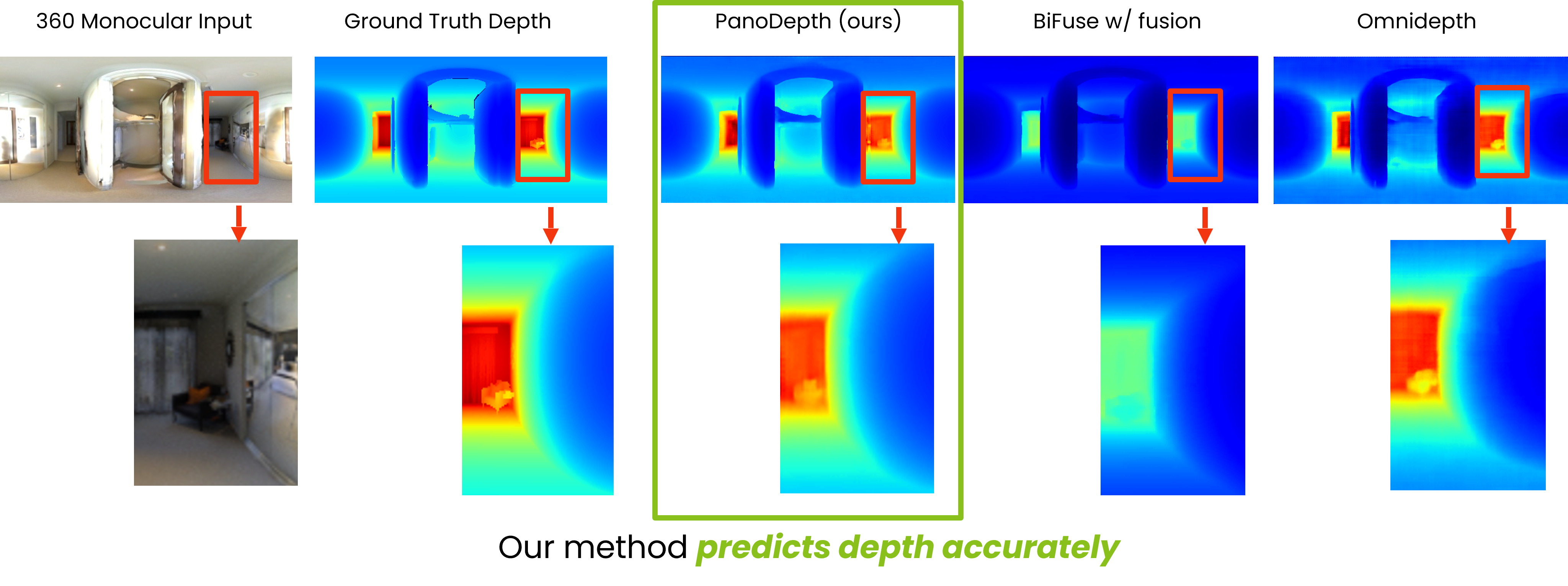

Omnidirectional 3D information is essential for a wide range of applications such as virtual reality, autonomous driving, and robotics. In this paper, we propose a novel, model-agnostic, two-stage pipeline for omnidirectional monocular depth estimation. Our proposed framework, PanoDepth, takes a single 360° image as input, produces one or more synthesized views in the first stage, and feeds the original image and the synthesized images into the subsequent stereo matching stage. In the second stage, we propose a differentiable Spherical Warping Layer to handle omnidirectional stereo geometry efficiently and effectively. By utilizing the explicit stereo-based geometric constraints in the stereo matching stage, PanoDepth can generate dense, high-quality depth. We conducted extensive experiments and ablation studies to evaluate PanoDepth, covering both the full pipeline and the individual modules in each stage. Our results show that PanoDepth outperforms state-of-the-art approaches by a large margin for 360° monocular depth estimation.
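To make the idea of omnidirectional stereo geometry concrete, the sketch below works through the textbook relation between range and angular disparity for two spherical cameras. It is not the paper's Spherical Warping Layer. It assumes an equirectangular image and a second camera placed directly below the first (a vertical baseline), which the abstract does not specify. The function names `warp_latitude`, `latitude_to_row`, and `depth_from_angular_disparity` are hypothetical and chosen only for this illustration.

```python
import math


def warp_latitude(lat, depth, baseline):
    """Latitude (radians) at which a point appears in the lower camera.

    Assumption: the point is seen by the upper camera at latitude `lat`
    and range `depth`, and the lower camera sits `baseline` units
    directly below. Longitude is unchanged by a purely vertical shift.
    """
    horizontal = depth * math.cos(lat)          # distance from the vertical axis
    height = depth * math.sin(lat) + baseline   # height above the lower camera
    return math.atan2(height, horizontal)


def latitude_to_row(lat, image_height):
    """Map latitude in [-pi/2, pi/2] to a fractional pixel row.

    Assumption: row 0 is the top of the equirectangular image (+pi/2).
    """
    return (0.5 - lat / math.pi) * image_height


def depth_from_angular_disparity(lat, disparity, baseline):
    """Invert warp_latitude: recover range from disparity = lat' - lat.

    Derived from r * sin(lat' - lat) = baseline * cos(lat').
    """
    lat_lower = lat + disparity
    return baseline * math.cos(lat_lower) / math.sin(disparity)
```

Under these assumptions, a stereo matcher could sample the second view along the image column at the row given by `latitude_to_row(warp_latitude(...))` for each depth hypothesis, which is why corresponding points stay on the same column and only their row changes.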

Cite

@inproceedings{li2021panodepth,
  author={Li, Yuyan and Yan, Zhixin and Duan, Ye and Ren, Liu},
  booktitle={2021 International Conference on 3D Vision (3DV)},
  title={PanoDepth: A Two-Stage Approach for Monocular Omnidirectional Depth Estimation},
  year={2021},
  volume={},
  number={},
  pages={648-658},
  doi={10.1109/3DV53792.2021.00074}
}